The Great Processing Power Divide

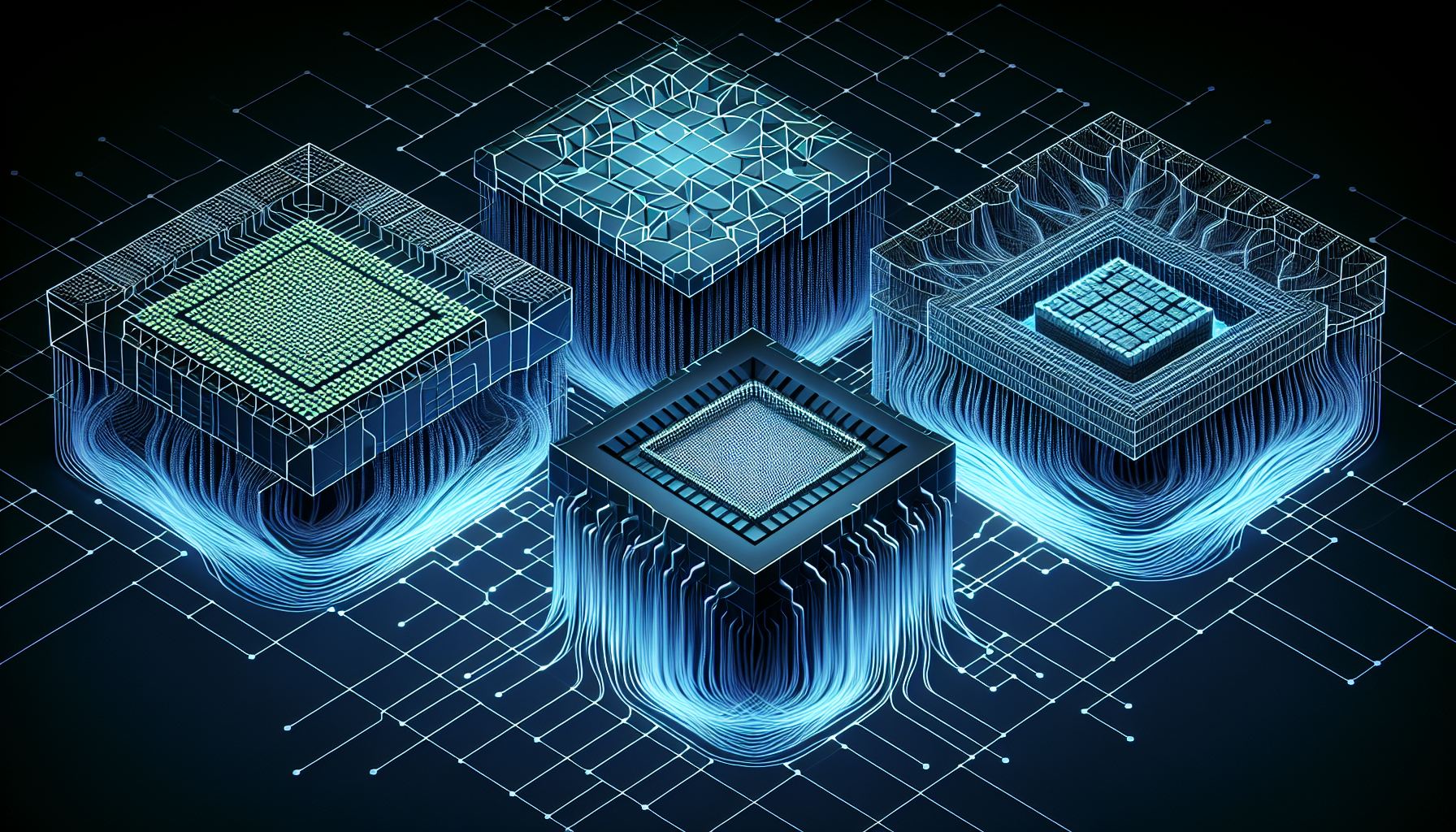

The contest for computational performance is no longer decided by clock speed alone. It now turns on architectural philosophies that are reshaping entire industries. In 2026, the distinction between Central Processing Units (CPUs), Graphics Processing Units (GPUs), and Tensor Processing Units (TPUs) matters more than ever to developers, engineers, and organizations trying to match hardware to diverse computing workloads.

These three architectures take fundamentally different approaches to computation, and each is optimized for a particular class of tasks. Understanding their trade-offs is essential for making informed decisions about hardware deployment and application design.

CPU Architecture: The Swiss Army Knife of Computing

CPUs remain the cornerstone of general-purpose computing. They are built for low latency and excel at sequential processing: each core devotes much of its silicon to control logic, including rich instruction sets and sophisticated branch prediction. This makes CPUs suitable for a wide range of applications that require rapid decision-making and varied computational patterns.
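
To make branch prediction concrete, here is a minimal sketch of a two-bit saturating counter, a classic textbook predictor. Real CPUs use far more elaborate and largely undocumented predictors, and the function name below is hypothetical; the sketch only shows the idea that hardware guesses each branch's direction from its recent history.

```python
import random


def predictor_accuracy(outcomes):
    """Fraction of branch outcomes a single two-bit saturating counter predicts correctly.

    States 0-1 predict "not taken" and states 2-3 predict "taken". Each actual
    outcome moves the counter one step toward it, saturating at 0 and 3, so a
    single surprise does not immediately flip a strongly held prediction.
    """
    if not outcomes:
        return 0.0
    state = 2  # start in "weakly taken"
    correct = 0
    for taken in outcomes:
        if (state >= 2) == taken:
            correct += 1
        # Step toward the actual outcome without leaving the 0..3 range.
        state = min(state + 1, 3) if taken else max(state - 1, 0)
    return correct / len(outcomes)


if __name__ == "__main__":
    loop_branch = ([True] * 9 + [False]) * 100  # taken 9 times, then the loop exits
    rng = random.Random(0)
    random_branch = [rng.random() < 0.5 for _ in range(1000)]  # no pattern at all
    print(f"loop branch:   {predictor_accuracy(loop_branch):.2f}")  # loop branch:   0.90
    print(f"random branch: {predictor_accuracy(random_branch):.2f}")
```

The loop branch is predicted correctly 90% of the time, missing only at each loop exit, while the patternless branch lands near 50%. Every miss forces the pipeline to discard speculative work, which is why unpredictable control flow is costly even on a CPU.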

The strength of CPU design lies in its versatility. Modern CPUs have multiple cores, from a handful in consumer chips to more than 100 in current server processors, and each core is optimized for complex, interdependent tasks. This makes CPUs particularly effective for operating systems, databases, web servers, and other applications that switch context frequently.

CPUs also stay relevant through out-of-order execution, speculative execution, and large cache hierarchies. These features keep performance high even when code paths are unpredictable and memory accesses are irregular, conditions that would challenge more specialized processors.
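
Caches reward locality, which the toy simulator below illustrates. It models a single direct-mapped cache of 64 lines of 64 bytes (4 KiB); these sizes and the function name are illustrative assumptions, and real CPUs use several levels of larger, set-associative caches. It still shows why access patterns matter: sequential accesses reuse each fetched line, while scattered accesses rarely do.

```python
import random


def cache_hit_rate(addresses, num_lines=64, line_size=64):
    """Simulate a direct-mapped cache and return the fraction of accesses that hit.

    Each address belongs to block addr // line_size, and each block can live in
    exactly one cache line, index = block % num_lines. The stored block number
    acts as the tag that tells us whether the line holds the block we want.
    """
    if not addresses:
        return 0.0
    lines = [None] * num_lines  # block currently held by each cache line
    hits = 0
    for addr in addresses:
        block = addr // line_size
        index = block % num_lines
        if lines[index] == block:
            hits += 1
        else:
            lines[index] = block  # miss: fetch the whole line from memory
    return hits / len(addresses)


if __name__ == "__main__":
    sequential = list(range(0, 8 * 4096, 8))  # walk 4096 consecutive 8-byte items
    rng = random.Random(0)
    scattered = [rng.randrange(0, 1 << 30, 8) for _ in range(4096)]  # 8-byte items anywhere in 1 GiB
    print(f"sequential: {cache_hit_rate(sequential):.3f}")  # sequential: 0.875
    print(f"scattered:  {cache_hit_rate(scattered):.3f}")
```

Sequential access misses once per 64-byte line and then hits on the next seven 8-byte reads, a 0.875 hit rate; scattered access over 1 GiB almost never finds its line already cached.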

GPU Revolution: Parallel Processing Powerhouses

GPUs represent a shift toward massive parallelism. Instead of a few dozen complex cores, a modern GPU contains thousands of simple arithmetic units (NVIDIA calls them CUDA cores, AMD calls them stream processors), and high-end parts exceed 10,000. Each unit is designed to perform simple calculations in parallel with the others rather than to run complex sequential code.

The GPU's architectural philosophy centers on maximizing throughput rather than minimizing latency. GPUs excel at matrix operations and graphics rendering, which makes them indispensable for large-scale mathematical computation. This parallel capability has carried GPUs well beyond graphics into cryptocurrency mining, scientific simulation, and artificial intelligence training.
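
Matrix multiplication suits this model because every element of the output can be computed independently of the others. The sketch below imitates the common CUDA-style decomposition of one thread per output element in plain Python; the function names are hypothetical, and the "launch" runs serially, so it illustrates how the work divides, not how fast a GPU performs it.

```python
def matmul_thread(a, b, row, col):
    """Work done by one logical GPU thread: a single output element c[row][col]."""
    return sum(a[row][k] * b[k][col] for k in range(len(b)))


def launch_matmul_grid(a, b):
    """Emulate launching one thread per output element of a @ b.

    No thread reads another thread's result, so on a GPU all of these calls
    could run at once across thousands of arithmetic units. Here they simply
    run one after another.
    """
    rows, cols = len(a), len(b[0])
    return [[matmul_thread(a, b, r, c) for c in range(cols)] for r in range(rows)]


if __name__ == "__main__":
    a = [[1, 2], [3, 4]]
    b = [[5, 6], [7, 8]]
    print(launch_matmul_grid(a, b))  # [[19, 22], [43, 50]]
```

A 1024-by-1024 product already yields 1,048,576 independent pieces of work, more than enough to keep every arithmetic unit on a GPU busy.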

GPUs also use memory hierarchies designed to keep thousands of arithmetic units fed. Data-center GPUs incorporate high-bandwidth memory (HBM) and memory controllers that deliver several terabytes per second of bandwidth, far more than typical CPU memory systems. This lets GPUs stream through massive datasets that would bottleneck CPU-based systems.
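
One common way to reason about this is the roofline model, which caps a kernel's throughput at the lower of the processor's peak compute rate and its memory bandwidth multiplied by the kernel's arithmetic intensity (FLOPs per byte moved). The device figures below are hypothetical round numbers chosen for illustration, not specifications of any real CPU or GPU.

```python
def attainable_gflops(peak_gflops, bandwidth_gb_per_s, flops_per_byte):
    """Roofline bound: min(peak compute, memory bandwidth x arithmetic intensity)."""
    return min(peak_gflops, bandwidth_gb_per_s * flops_per_byte)


# Hypothetical round numbers for illustration only, not real product specs.
DEVICES = {
    "cpu": (2_000.0, 100.0),  # (peak GFLOP/s, memory bandwidth GB/s)
    "gpu": (50_000.0, 3_000.0),
}

WORKLOADS = {
    # FP32 c = a + b: 1 FLOP per 12 bytes (two 4-byte loads, one 4-byte store).
    "vector add": 1 / 12,
    # Hypothetical compute-heavy kernel that reuses each byte many times.
    "compute-heavy": 100.0,
}

if __name__ == "__main__":
    for workload, intensity in WORKLOADS.items():
        for device, (peak, bandwidth) in DEVICES.items():
            bound = attainable_gflops(peak, bandwidth, intensity)
            print(f"{workload:>13} on {device}: {bound:>8.1f} GFLOP/s")
```

With these numbers the vector add is bandwidth-bound on both devices (about 8.3 versus 250 GFLOP/s), so the GPU's advantage equals its 30-fold bandwidth advantage; only the compute-heavy kernel reaches each device's peak.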

TPU Specialization: Google's Neural Network Accelerators

Tensor Processing Units push specialization further still. Google developed them as hardware accelerators specifically for artificial intelligence workloads, and they are built around systolic arrays that are particularly efficient for neural network training and inference.

A systolic array is a grid of processing elements through which data moves in lockstep: on each clock cycle, every element performs a multiply-accumulate on the values it holds and passes data on to its neighbors. This pattern maps directly onto the matrix multiplications that form the backbone of neural network computation. In Google's 2017 evaluation of its first-generation TPU, the chip ran production inference workloads 15 to 30 times faster than contemporary CPUs and GPUs, with 30 to 80 times better performance per watt.
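
The lockstep data movement is easier to follow in a small simulation. The sketch below models an output-stationary systolic array, one common textbook dataflow in which each processing element keeps its own running sum while operands stream past. That dataflow is an illustrative assumption, not a model of any particular TPU generation, whose matrix units differ in dataflow and size, and the function name is hypothetical.

```python
def systolic_matmul(a, b):
    """Simulate an output-stationary systolic array computing the product a @ b.

    Processing element (PE) (i, j) accumulates c[i][j] in place. Values of `a`
    enter at the left edge and shift one PE right per cycle; values of `b`
    enter at the top edge and shift one PE down per cycle. Row i of `a` and
    column j of `b` are delayed by i and j cycles so that a[i][s] and b[s][j]
    arrive at PE (i, j) in the same cycle.
    """
    n, k, m = len(a), len(b), len(b[0])
    a_reg = [[0] * m for _ in range(n)]  # `a` operand held by each PE this cycle
    b_reg = [[0] * m for _ in range(n)]  # `b` operand held by each PE this cycle
    acc = [[0] * m for _ in range(n)]    # stationary partial sums

    for t in range(n + m + k - 2):
        new_a = [[0] * m for _ in range(n)]
        new_b = [[0] * m for _ in range(n)]
        for i in range(n):
            for j in range(m):
                if j == 0:
                    s = t - i  # skewed feed into the left edge
                    new_a[i][j] = a[i][s] if 0 <= s < k else 0
                else:
                    new_a[i][j] = a_reg[i][j - 1]  # receive from the left neighbor
                if i == 0:
                    s = t - j  # skewed feed into the top edge
                    new_b[i][j] = b[s][j] if 0 <= s < k else 0
                else:
                    new_b[i][j] = b_reg[i - 1][j]  # receive from the upper neighbor
                acc[i][j] += new_a[i][j] * new_b[i][j]  # one multiply-accumulate
        a_reg, b_reg = new_a, new_b  # all PEs latch their new operands together
    return acc


if __name__ == "__main__":
    print(systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```

Each value of `a` is read from memory once, at the array's left edge, and is then reused by every processing element in its row; each value of `b` is likewise reused down its column. That reuse, rather than re-fetching operands for every multiply, is what cuts memory traffic. For an n-by-k times k-by-m product the simulation finishes in n + m + k - 2 cycles.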

TPUs demonstrate how purpose-built hardware can achieve dramatic efficiency gains when architectural design aligns precisely with specific computational patterns. The data flow architecture in TPUs minimizes memory access overhead and maximizes computational throughput for the mathematical operations most common in machine learning applications.

Modern Authentication and Observability Integration

Beyond raw processing power, modern computing deployments must integrate with contemporary security and monitoring frameworks. Proof Key for Code Exchange (PKCE), an OAuth 2.0 extension defined in RFC 7636, has become a standard safeguard for web and mobile applications: it ensures that an intercepted authorization code cannot be exchanged for tokens by anyone who lacks the client's secret code verifier.

PKCE adds very little computation, and none of it depends on the accelerator. The client generates a random code verifier, sends a Base64url-encoded SHA-256 hash of it (the code challenge) with the authorization request, and later presents the verifier itself when redeeming the code; the authorization server recomputes the hash and compares. These steps run on ordinary CPUs on the client and server, so GPUs and TPUs play no part in them.
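
The client-side work is small enough to show in full. The sketch below follows the S256 method from RFC 7636 using only Python's standard library; the function names are hypothetical, and a real deployment would normally rely on a maintained OAuth client library rather than hand-rolled code.

```python
import base64
import hashlib
import secrets


def make_code_verifier():
    """Return a random code verifier (RFC 7636 allows 43 to 128 characters)."""
    # 32 random bytes become 43 URL-safe Base64 characters once padding is stripped.
    return secrets.token_urlsafe(32)


def code_challenge_s256(verifier):
    """S256 method: BASE64URL(SHA-256(ASCII(verifier))) with '=' padding removed."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def server_accepts(verifier, stored_challenge):
    """Server side: recompute the challenge from the presented verifier and compare."""
    return secrets.compare_digest(code_challenge_s256(verifier), stored_challenge)


if __name__ == "__main__":
    verifier = make_code_verifier()  # kept secret by the client
    challenge = code_challenge_s256(verifier)  # sent with the authorization request
    print(server_accepts(verifier, challenge))  # True
    print(server_accepts(make_code_verifier(), challenge))  # False
```

As a check, RFC 7636's Appendix B pairs the verifier `dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk` with the challenge `E9Melhoa2OwvFrEMTJguCHaoeK1t8URWbuGJSstw-cM`, which `code_challenge_s256` reproduces. The entire cost is one hash on each side.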

Distributed tracing has likewise adapted to heterogeneous environments. The OpenTelemetry Collector receives, processes, and exports traces, metrics, and logs in a vendor-neutral format, so services running on CPUs, GPUs, and TPUs can report telemetry through a single pipeline. This unified view lets organizations monitor performance across heterogeneous deployments and pinpoint architectural bottlenecks and optimization opportunities.
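
The core idea behind distributed tracing, spans that share a trace ID and point to their parent, fits in a few lines. The sketch below is a hypothetical toy tracer written for illustration, not the OpenTelemetry API; the `device` attribute is likewise an invented example of how spans could be tagged with the hardware they ran on, so that a backend can break latency down by processor type.

```python
import contextvars
import secrets
import time
from contextlib import contextmanager

# Hypothetical toy tracer for illustration only; this is NOT the OpenTelemetry API.
_current_span = contextvars.ContextVar("current_span", default=None)
finished_spans = []  # stands in for an exporter that would ship spans to a collector


@contextmanager
def span(name, **attributes):
    """Record a timed span that inherits its parent's trace ID and links to the parent."""
    parent = _current_span.get()
    record = {
        # A root span starts a new trace; children reuse the parent's trace ID.
        "trace_id": parent["trace_id"] if parent else secrets.token_hex(16),
        "span_id": secrets.token_hex(8),
        "parent_id": parent["span_id"] if parent else None,
        "name": name,
        "attributes": attributes,
    }
    token = _current_span.set(record)  # make this span the parent of nested spans
    start = time.perf_counter()
    try:
        yield record
    finally:
        record["duration_s"] = time.perf_counter() - start
        _current_span.reset(token)  # restore the previous current span
        finished_spans.append(record)


if __name__ == "__main__":
    with span("handle_request", device="cpu"):
        with span("tokenize", device="cpu"):
            pass
        with span("model_inference", device="gpu"):
            pass
    for s in finished_spans:
        print(s["name"], s["attributes"]["device"], s["trace_id"][:8], s["parent_id"])
```

The ID sizes mirror W3C Trace Context (16-byte trace IDs, 8-byte span IDs). In a real distributed system the trace ID and parent span ID cross process boundaries in a request header such as `traceparent`, which is how a span recorded on a GPU inference server joins the same trace as the CPU-side request that triggered it.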

Future Implications and Strategic Decisions

The architectural divide between CPUs, GPUs, and TPUs is likely to deepen as specialized computing demands continue growing. Organizations may increasingly adopt hybrid architectures that leverage each processor type's strengths within integrated systems.

Cloud providers are expected to offer more granular processor selection options, allowing applications to dynamically allocate workloads to the most appropriate hardware architecture. This evolution could lead to more cost-effective computing solutions and improved performance across diverse application portfolios.

As artificial intelligence, high-performance computing, and real-time analytics continue expanding, the strategic selection of processor architectures will become increasingly critical for competitive advantage. Organizations that understand these architectural trade-offs and align them with their specific workload requirements are positioned to achieve optimal efficiency, performance, and cost-effectiveness in the evolving computing landscape.